Integration of social and physical understanding

Authors: Shari Liu, Joseph Outa, Seda Karakose-Akbiyik

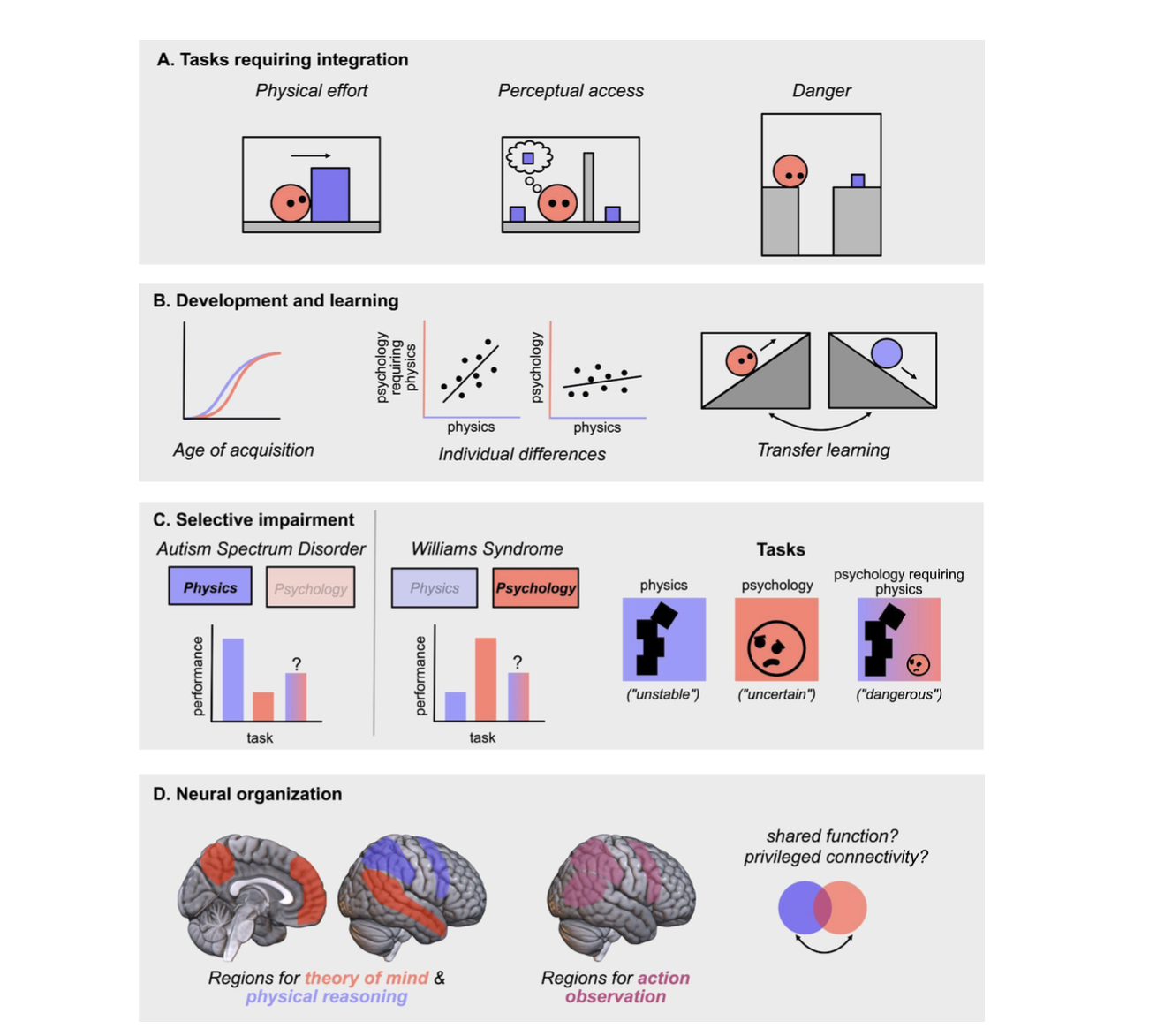

How do humans make sense of other people: entities who are simultaneously psychological beings and physical objects? Across the cognitive sciences, including psychology, anthropology, philosophy, and neuroscience, scholars have puzzled over the origins and structure of our understanding of the physical world on the one hand, and the psychological world on the other. Each of these research areas has accumulated evidence that these two domains of thought are independent, modular systems. We disagree. Starting in infancy, and indeed throughout our lives, our psychological reasoning (naive psychology) depends on inputs from physical reasoning (naive physics). In this paper, we first review evidence for the modularity hypothesis from developmental psychology, cross-cultural research, cognitive neuroscience, and neuropsychology, and argue that this work provides evidence for domain-specificity (that naive psychology and physics are conceptually distinct) but not for modularity (that these two abilities are informationally encapsulated from each other). To the contrary, we use evidence from each of these disciplines, and research from computational cognitive science, to argue that naive psychology and physics constitute parallel and integrated systems in human minds and brains. We end by laying out a research program to investigate this integration.

Liu, S., Outa, J., & Akbiyik, S. (Under Review). Naive psychology depends on naive physics. [https://doi.org/10.31234/osf.io/u6xdz]

Development of multi-modal inference

Authors: Joseph Outa, Xi Jia Zhou, Hyowon Gweon, Tobias Gerstenberg

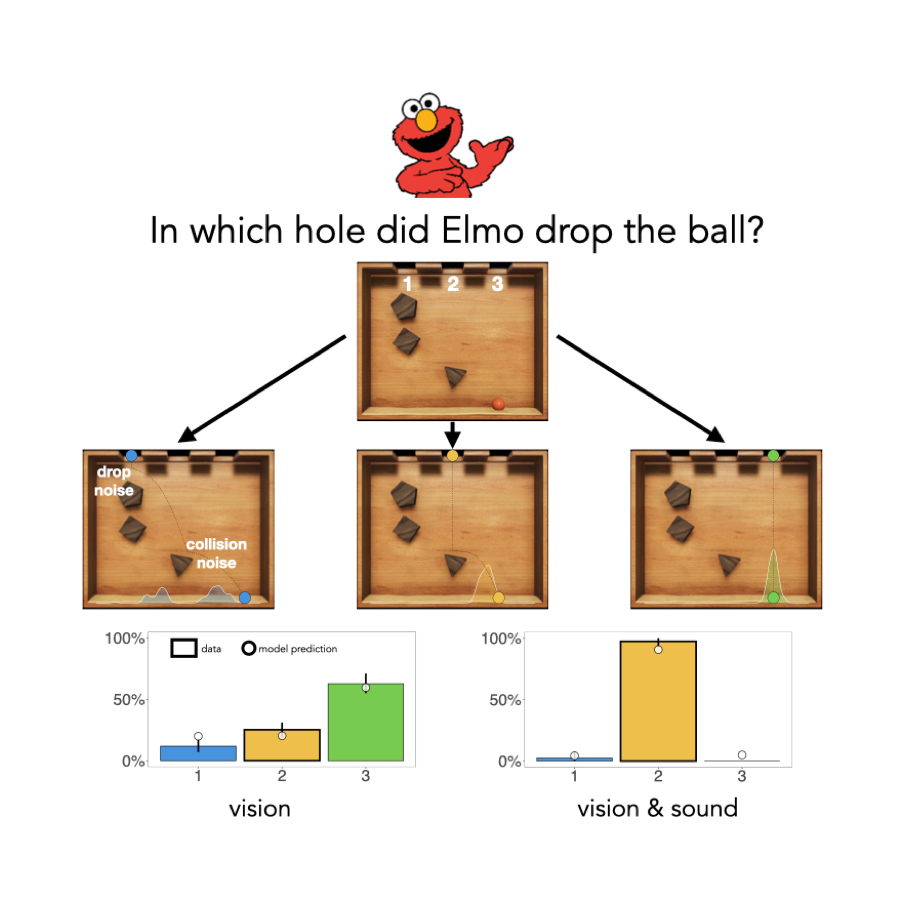

How does the ability to integrate multi-modal information develop in early childhood? We probe this using the plinko task, a simple and intuitive way to assess children’s physical reasoning based on multi-modal evidence. The task uses a box that is occluded while a ball is dropped into one of three holes; the ball produces sounds as it hits the obstacles and lands in sand at the bottom. Participants are then asked to infer which hole the ball was dropped into. Prior work suggests that adults solve the task with a mental simulation strategy that integrates the visual and auditory information. Our study finds that 3- to 5-year-old children’s responses are more consistent with a matching strategy (choosing the hole that’s closest to where the ball landed), and that children transition to using both unimodal and multimodal simulation strategies between 6 and 8 years of age.
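The matching strategy described above can be written down in a few lines. This is only an illustrative sketch, not the model used in the paper: the function name, the hole coordinates, and the landing position are all hypothetical, and the only assumption carried over from the text is that the chosen hole is the one horizontally closest to where the ball landed.

```python
def matching_strategy(hole_positions, landing_x):
    """Return the index of the hole closest to the ball's landing position.

    hole_positions: horizontal coordinates of the holes (hypothetical units).
    landing_x: horizontal coordinate where the ball came to rest.
    Ties go to the leftmost hole, an arbitrary choice made for this sketch.
    """
    return min(
        range(len(hole_positions)),
        key=lambda i: abs(hole_positions[i] - landing_x),
    )


# Hypothetical layout: three holes along the top of the box.
holes = [100.0, 300.0, 500.0]
choice = matching_strategy(holes, landing_x=340.0)
print(f"Chosen hole index: {choice}")
```

A simulation strategy would instead compare the observed sounds and final position against the outcomes predicted for a drop from each hole, which requires a physics model and is not sketched here.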

Outa, J.*, Zhou, X. J.*, Gweon, H., & Gerstenberg, T. (2022). Stop, children what’s that sound? Multi-modal inference through mental simulation. Proceedings of the 44th Annual Conference of the Cognitive Science Society. Austin, TX: CSS. *Joint first authors. [Repository]

Reasoning about conversational AI agents

Authors: Griffin Dietz, Joseph Outa, James A. Landay, Hyowon Gweon

Conversational AI devices (i.e. smart speakers) are increasingly present in our lives and even used by children to ask questions, play, and learn. These entities not only blur the line between objects and agents - they are speakers (objects) that respond to speech and engage in conversations (agents) - but also operate differently from humans. Here we use a variant of a classic false-belief task to explore adults’ and children’s attributions of mental states to conversational AI versus human agents. While adults understood that two conversational AI devices, unlike two human agents, may share the same “beliefs”, 3- to 8-year-old children treated two conversational AI devices just like human agents; by 5 years of age, they expected the two devices to maintain separate beliefs rather than share the same belief, with hints of developmental change. Our results suggest that children initially rely on their understanding of agents to make sense of conversational AI.

Dietz, G., Outa, J., Lowe, L., Landay, J. A., & Gweon, H. (2023). Theory of AI Mind: How adults and children reason about the “mental states” of conversational AI. In Proceedings of the Annual Meeting of the Cognitive Science Society (Vol. 45, No. 45). Austin, TX: CSS.